If you’re setting a generative AI strategy for your company without having used the tools yourself, you’re making decisions through a secondhand lens. The leaders who will get this right are the ones willing to eat their own dog food — and the experience changes what you believe is possible.

I’m not a software engineer — but I built a production application anyway

I’ve spent most of my career leading technology, product, and commercial transformations. I understand architecture, and I can read code, but I haven’t built production software myself in years. My role has always been to direct the work, not write it.

A small business I work with had spent years trying to replace a 30-year-old database system that I originally wrote and that had long outlived its architecture. After three earlier attempts (offshoring, open source, and low code), I decided to experiment with a generative AI coding tool and see how far I could get on my own. What started as a weekend experiment turned into a full production application: new workflows, integrations, multi-tenant architecture, and a modern interface. It’s now used daily and is materially more capable than the system it replaced.

The biggest surprise wasn’t speed — it was scope

AI has been part of the technology landscape for decades — machine learning, natural language processing, and predictive analytics are mature disciplines. What’s genuinely new is generative AI’s ability to produce high-quality working code, and the impact of that on what a non-engineer can realistically build.

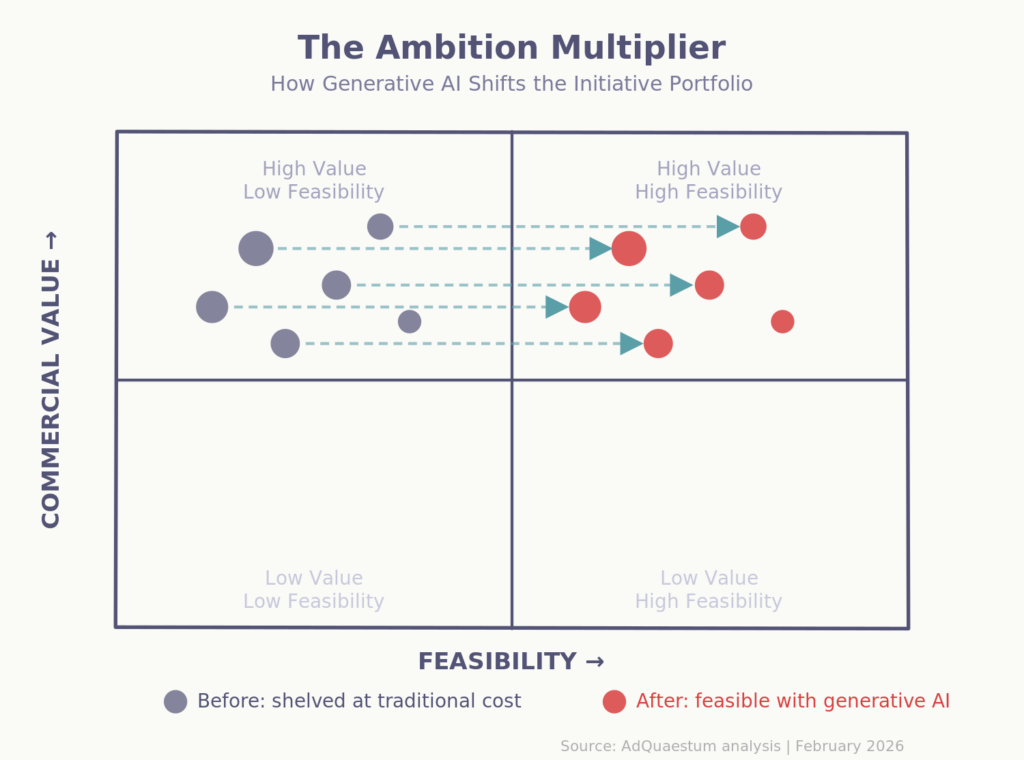

The gap between “I know exactly what this needs to do” and “I can actually make it do that” shrank dramatically. Features I wouldn’t have seriously considered under traditional economics — custom integrations, automated workflows, a complete UI overhaul — suddenly felt entirely reasonable to attempt. I didn’t just rebuild what existed. I tripled the functionality because the cost of ambition dropped to nearly zero.

The differentiator isn’t coding skill — it’s clarity of thought

What became clear quickly is that the limiting factor with these tools isn’t technical expertise. It’s the ability to describe what you want precisely, assess whether the output is right, and redirect when it isn’t. That’s not an engineering skill. That’s a leadership skill — the same skill that makes someone effective at directing a team, running a product review, or writing a clear brief.

This is why I believe the “eat your own dog food” principle matters here. When a CEO or operating partner sits down with a generative AI tool and works through a real problem — even a small one — they develop an intuition for what’s possible that no briefing document can replicate. They understand the feedback loop, the iteration speed, and where the tools genuinely struggle versus where they excel.

You can’t set a credible AI strategy from the sidelines

There’s a growing gap between leaders who have firsthand experience with generative AI tools and those relying on vendor presentations, analyst reports, and secondhand accounts from their teams. Both groups are making AI investment decisions. But they’re operating with very different levels of judgment.

The leader who has spent a weekend building something — even something simple — understands viscerally what generative AI can and can’t do. They know where it accelerates and where it hallucinates. They can challenge their CTO’s roadmap from a position of informed experience rather than delegated trust. That credibility carries from the boardroom to the engineering floor.

The strategic risk is leading AI transformation without personal conviction

Every company is developing an AI strategy. Many of those strategies are being set by people who haven’t personally used generative AI tools for anything more demanding than drafting an email. That creates a specific kind of risk: strategic direction shaped by abstraction rather than experience.

AI — in its broadest sense — isn’t new. Companies have been deploying machine learning models, recommendation engines, and automated decisioning for years. What’s new is that generative AI has fundamentally changed the build-versus-buy calculus. The question is no longer just “should we invest in AI?” It’s “what can we now build that we couldn’t before?” — and answering that question well requires understanding the tools firsthand.

Spend a weekend building something — it will recalibrate your judgment

I’d encourage any leader who influences technology investment to try it. Not because you need to become a developer — you don’t. But because the experience recalibrates your judgment in ways that matter. You’ll develop an opinion on where generative AI tools add genuine value versus where they generate plausible-looking output that falls apart under scrutiny. You’ll understand the difference between AI as a productivity tool and AI as a capability multiplier. And you’ll have the credibility to lead the conversation rather than delegate it.

The barrier between idea and execution is lower than most leaders realize. The ones who discover that firsthand will set better strategies, ask better questions, and make better investment decisions. The ones who don’t will rely on others’ judgment about the most consequential technology shift in a generation.

Generative AI doesn’t just change what your company can build. It changes what you personally can build. Leaders who experience that shift firsthand will lead the AI conversation with conviction. Those who don’t will be following someone else’s playbook.